Instagram Rolled Out Teen Accounts. Should We Celebrate?

Rather interesting timing for common-sense changes.

Earlier this week, Meta rolled out new, stricter default controls for teens under 16 that will apply to new and existing accounts. These “Teen Accounts” will have “built-in limits on who can contact them and the content they see.”

It’s hard not to notice the timing of this release, which coincided with the markup of KOSA (the Kids Online Safety Act) in the House Energy and Commerce Committee. The launch included a big Meta event in New York, endorsements from the National PTA and the American Academy of Pediatrics, and a room full of influencers.

But I still don’t feel like inviting Mark Zuckerberg to my birthday party. I have reasons. But first, let’s talk about what these new features will do, because even suspiciously timed child safety measures will protect some kids from harm. And I’ll always cheer for that.

Long Overdue Protections

More details about the changes from Instagram’s announcement:

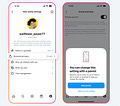

Default private accounts: With default private accounts, teens need to accept new followers, and people who don’t follow them can’t see their content or interact with them. This applies to all teens under 16 (including those already on Instagram and those signing up) and teens under 18 when they sign up for the app.

My add: a significant weakness remains. A teen’s followers and the accounts they follow are still visible, and both lists should be private by default. In sextortion schemes, a bad actor whose friend request a kid has accepted often uses that follower list against the kid.

Messaging restrictions: Teens will have the strictest messaging settings, so they can only be contacted by people they follow or are already connected to.

Sensitive content restrictions: Teens will automatically be placed into the most restrictive sensitive content control setting, which limits the type of sensitive content (such as content that shows people fighting or promotes cosmetic procedures) teens see in places like Explore and Reels.

My add: PYE followers are waiting to see whether this ventures into censorship.

Limited interactions: Teens can only be tagged or mentioned by people they follow. Instagram will also automatically turn on the most restrictive version of its anti-bullying feature, Hidden Words, so that offensive words and phrases will be filtered out of teens’ comments and DM requests.

Time limit reminders: Teens will get notifications telling them to leave the app after 60 minutes each day. (Note: parents will have the option to fully block their teen’s access to Instagram after the time limit has been reached.)

Sleep mode enabled: Sleep mode will be turned on between 10 PM and 7 AM, which will mute notifications overnight and send auto-replies to DMs. (Note: parents also will have the option to fully block their teen’s access to Instagram during these hours.)

Parental Supervision: This Is New

Now parents can connect their Instagram accounts to their teen’s account. This approach is similar to Snapchat’s Family Center.

Teens under 16 will need their parent’s permission to use less protective settings. Teens ages 16-17 will have these features “on” by default (which is great), but can toggle them off without parental permission.

According to Meta’s announcement, teens under 16 who want permission to change these settings must first set up parental supervision on Instagram. If parents want more oversight of their older teens’ (16+) experiences, the teen must invite them to supervise. Then, parents can approve any changes to these settings, regardless of their teen’s age.

Once supervision is established, parents can approve or deny their teens’ requests to change settings, or allow teens to manage their settings themselves. Soon, parents will also be able to change these settings directly to be more protective.

Get insights into who their teens are chatting with: While parents can’t read their teen’s messages, now they will be able to see who their teen has messaged in the past seven days. Just like in Snapchat.

Set total daily time limits for teens’ Instagram usage: Parents can decide how much time their teen can spend on Instagram each day. Once a teen hits that limit, they’ll no longer be able to access the app.

Block teens from using Instagram for specific time periods: Parents can choose to block their teens from using Instagram at night, or for specific time periods, with one easy button.

See topics your teen is looking at: Parents can view the age-appropriate topics their teen has chosen to see content from, based on their interests.

What if Kids Lie About Their Age?

Meta’s response: “Teens may lie about their age to circumvent these new protections. That’s why we’re requiring teens to verify their age in new ways. For example, if they attempt to create a new account with an adult birthday, we will require them to verify their age in order to use the account. We’re also building new technology to find teens that have lied about their age to automatically place them in protected settings.”

In other words, Meta will treat anyone who signs up with an adult birthday as a possible teen until they prove otherwise with an ID or a video selfie (this article explains how the video selfie option works). That’s good.

I suspect kids will try to beat this by creating accounts with a 16-year-old’s birthday, which lets them switch off the default controls without parental permission. If Meta wanted to go one step further, it could require an adult to verify the birthday of anyone claiming to be under 18, closing this “hack.” That could be done with a small charge to a parent’s credit card (already an easy, common practice).

Meta will also use AI to identify accounts it suspects belong to kids under 16. If the algorithm flags a user as underage, that user will have to complete the video selfie process to prove otherwise.

Meta, like other app makers, believes age verification should happen at the device or app store level, not in every app. We actually agree with Meta on this point, and you’ll hear more from us about this idea soon.

So, Where’s the Cake and Ice Cream?

I typed this draft long after I should have been in bed because, as I told Andrea (my wife) last night, I felt unsettled. I was rattled by videos of a Christian influencer at the Meta announcement event in New York. She was all smiles, making Reels and recording podcast episodes with the Meta executive responsible for this change.

Does she know how many kids have died while using Instagram?

Has she watched interviews about the evidence of harm to children that Meta (Mark Zuckerberg specifically) ignored?

Has she ever faced off against big tech lobbyists, their lies, tactics, and deceptions?

Forgive my cynicism, but I don’t trust Meta. There’s something insidious about what happened here. Instagram is 14 years old, yet it made these changes this week, with KOSA news imminent. During those 14 years, how many thousands of children have been harmed, abused, exploited, trafficked, or sexualized because of Instagram? How many have died?

I speak with thousands of teens across the country, sharing sextortion stories like that of Jordan DeMay from Michigan. Simple optical character recognition technology that has existed for decades could have kept Jordan alive. But Instagram doesn’t use it.

The child exploitation problem is decades old. Why don’t we require sophisticated, automatic child safety measures on everything children can access?

One person pushed back on my cynicism with this:

National PTA’s endorsement doesn’t convince me of much. They receive funding from Meta, YouTube, Discord, TikTok, Google, and AT&T. Their endorsement is 100% PR-driven and conflicted. I know Meta has a teen advisory board. Snapchat does, too. That doesn’t mean they’re designing for teen thriving. They’re asking teens to help them make a destructive product a little less destructive to give us the impression of care.

(Michael Salter explores big tech’s insincerity with this brilliant illustration.)

Let me say it again: these are good changes that will help many kids. But I don’t applaud Meta, because when I step back, here’s what I saw this week.

I saw us celebrating a decision to show less explicit content to seventh graders, stop strangers from contacting seventh graders, and encourage seventh graders to get enough sleep. Wait, Instagram, why did that take 14 years? And how did you convince us to celebrate slightly less negligence toward children? It almost feels like gaslighting.

Going to that Meta release party in New York kinda feels like going to a party to celebrate a version of Roundup that causes less cancer.

Forgive me for not celebrating. Instead, I’ll pray for John and Jennifer DeMay, who are missing their son. I’ll think about Molly Russell, whose death by suicide in 2017 sparked outrage but was only a blip on Instagram’s radar. Then, I’ll craft a few posts that help our followers contact their representatives about bringing a strong version of KOSA to the House floor for a vote. As Catherine Price says, “Let’s get this thing passed.”

Because Instagram still isn’t healthy for kids. #delayistheway. The very best things for our children are still almost always analog.